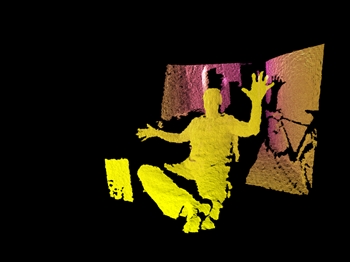

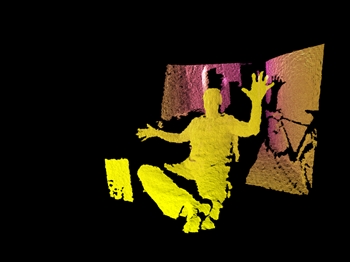

Pictured: The SDK block generates a point cloud without traversing the depth image

THIS BLOCK HAS BEEN REPLACED BY THE KINECT COMMON BRIDGE: http://bantherewind.com/kinect-common-bridge

Version 1.2.1 of the Kinect SDK block for Cinder is the result of a mission to make a rock-solid, high-performance, easy-to-use interface to this device. Point clouds can be generated without reading the depth image. Data is now received using a reliable update/callback system. Skeletons contain more data and can be rendered with a fraction of the code from previous versions. Initializing and reading multiple devices is a breeze, as is mapping skeleton data to video or depth images. Subtle details like seated mode, near mode, and optional verbose error reporting take further advantage of the Kinect's capabilities while making it easier to use.

This block is meant to provide easy and reliable access to the device for both the tinkerer who just wants to prototype ideas and the professional who needs their Kinect(s) to run around the clock.

Pictured: The SDK block provides access to multiple devices, camera tilt, and more

GET KINECTSDK FOR CINDER ON GITHUB

BLOCK + INSTALLATION

Follow these easy steps to install:

1. Download and install the official SDK and drivers here
2. Clone the KinectSdk block from GitHub
3. Unzip the contents into your main Cinder path, merging it with your "blocks" folder
4. Go to "cinder/blocks/KinectSDK/samples/KinectApp/vc10" and open "KinectApp.sln" with Visual Studio C++ Express or Visual Studio 2010
5. Build and run

IMPORTANT! Do NOT move any of the SDK's files into the sample projects. The projects rely on a properly installed Kinect SDK to work.

To create your own project, I recommend starting with the KinectApp sample and building out from there. This will save you the work of setting up linking, DLL copying, etc.


CHANGE LOG

- 1.2.3: Fixed getDepthAt range; it is now always 0 to 1
- 1.2.2: Dropped template argument from add Type Callback() method
- 1.2.2: Removed second add Type Callback() signature
- 1.2.1: Added ContoursApp sample
- 1.2.1: Added DeviceOptions::enableUserTracking() to toggle user index flag (set to false when using multiple devices)
- 1.2.0: Switched to callback system for reduced CPU and improved stability
- 1.1.9: Works with Kinect SDK 1.5.0
- 1.1.9: New sample app demonstrating how to map skeletons to color and depth image
- 1.1.9: New sample app demonstrating how to run up to eight concurrent devices
- 1.1.9: Improved depth accuracy
- 1.1.9: New method to read depth values as float values from 0.0 (near) to 1.0 (far), without copying or referencing depth image
- 1.1.9: Helper methods to align skeleton positions to depth and color images
- 1.1.9: Query device index and unique ID
- 1.1.9: Initialize device by unique ID (ensures device order when using multiple Kinects)
- 1.1.9: Improved frame rate
- 1.1.9: Improved stability
- 1.1.9: New user colors
- 1.1.9: New depth colors: the red channel always contains the raw depth value, and values are ordered from 0 (near) to 4096 (far)
- 1.1.9: Better support for high resolution modes
- 1.1.9: Smarter initialization
- 1.1.9: Verbose error reporting
- 1.1.9: Visual Studio solution for all projects (VS10 Pro+ only)
- 1.1.9: Depth image surface channel order is now RGB, not RGBA (alpha was unused)
- 1.1.9: Handles physical disconnections / reconnections (read note below)
- 1.1.9: Better, VS10/VS Assist-friendly inline documentation
- 1.1.3: Updated to work with Kinect SDK 1.0.3.191
- 1.1.3: Updated for use with Cinder 0.8.4
- 1.1.2: Reduced CPU usage
- 1.1.2: Fixed an issue where low frame rates occurred when getting user count
- 1.1.1: Added "near mode"
- 1.1.1: Improved threading
- 1.1.0: Added support for stable SDK 1.0
- 1.1.0: Added static library, implemented in samples
- 1.1.0: Added "MeshApp" sample
- 1.0.0: Works with the new Kinect SDK 1.0 beta 2
- 1.0.0: Support for up to eight connected devices
- 1.0.0: Camera tilt
- 1.0.0: Greyscale mode
- 1.0.0: Improved performance and stability
- 1.0.0: Added PointCloudGpu sample application
- 1.0.0: Block arranged to meet new CinderBlock guidelines and improve portability
- 1.0.0: Includes improved AudioInput class for Windows
- 1.0.0: Block and samples exit with zero memory remaining
- 1.0.0: Fixed bug with high resolution modes

Read about the 0.0.x version here.

ON MULTIPLE DEVICES

A Kinect uses 61% of the bandwidth of a USB 2.0 controller. That may seem high, but note that each device contains a 1280x960 RGB camera, a 640x480 monochrome camera, a soundcard with multiple microphones, and two motors (neck and IR emitter). Two devices on one controller would need 122% of its bandwidth, so you need a separate controller for each device. Before you get frustrated with your additional device not working, make sure you have the bandwidth to support it.
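
To make the bandwidth arithmetic concrete, here is a minimal sketch in plain C++. It is not part of the block: the names `kPercentPerKinect`, `devicesPerController`, `controllersNeeded`, and `printControllerTable` are hypothetical helpers made up for illustration, and the only input taken from the text above is the 61% figure.

```cpp
#include <cstdio>

// Percentage of one USB 2.0 controller's bandwidth used by a single Kinect
// (the figure quoted in the text above). Hypothetical constant name.
const int kPercentPerKinect = 61;

// Number of devices one controller can carry without exceeding 100%.
// Returns 0 for invalid input or when one device needs more than 100%.
inline int devicesPerController(int percentPerDevice)
{
    if (percentPerDevice <= 0) return 0;
    return 100 / percentPerDevice; // integer division rounds down
}

// Controllers required for deviceCount devices,
// or -1 if a controller cannot carry even one device.
inline int controllersNeeded(int deviceCount, int percentPerDevice)
{
    const int perController = devicesPerController(percentPerDevice);
    if (perController == 0) return -1;
    return (deviceCount + perController - 1) / perController; // ceiling division
}

// Prints the controller count for one to eight devices.
inline void printControllerTable()
{
    for (int n = 1; n <= 8; ++n) {
        std::printf("%d device(s): %d controller(s)\n",
                    n, controllersNeeded(n, kPercentPerKinect));
    }
}
```

At 61% per device, `devicesPerController` returns 1, so `controllersNeeded(n, kPercentPerKinect)` is simply `n`: two Kinects need two controllers, and eight need eight.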